OCLC Research conducted an international linked data survey for implementers between 7 July and 15 August 2014. This is the fourth post in the series reporting the results.

By far the two most common reasons given by the 51 linked data projects/services described in the survey for publishing linked data are to expose their data to a larger audience on the Web (45) and to demonstrate what could be done with their datasets as linked data (41). Other reasons, in descending order: heard about linked data and wanted to try it out by exposing their data as linked data; to improve Search Engine Optimization (SEO); the institution’s administration requested that the data be exposed as linked data; a national initiative; needing to consume their own linked data; making data available to suppliers; creating a research environment; wanting to make metadata open for future European legislative compliance; wanting to support the schema.org initiative and represent “things not strings”.

The types of data published as linked data by the described projects (in descending order):

- Descriptive metadata

- Bibliographic data (e.g., MARC records)

- Authority files

- Digital collections

- Encoded Archival Descriptions/Archival finding aids

- Ontologies/controlled vocabularies

- Statistical data

- Data about museum objects

- Geographic data

- Holdings data

The largest linked data datasets reported in descending order:

| Project/Service | Source | Size |
| --- | --- | --- |
| WorldCat.org | OCLC | 15 billion triples |
| WorldCat.org Works | OCLC | 5 billion triples |
| Europeana | Europeana Foundation | 3,798,446,742 triples for the pilot LOD service |
| The European Library | The European Library | 2,131,947,229 triples |
| Linked Open Data | Research Libraries UK | 936,054,853 triples |
| Opendata | Charles University in Prague | 750 million triples |
| Semantic Web Collection | British Museum | 100-500 million triples |
| Drug Encyclopedia | Charles University in Prague | 100-500 million triples |
| Cedar project | DANS | 100-500 million triples |
| id.loc.gov | Library of Congress | 100-500 million triples |
| British National Bibliography | British Library | 50-100 million triples |
| British Art collection | Yale Center for British Art | 57 million triples |

In addition to the datasets listed above, the Digital Public Library of America reported its dataset as 50-100 million triples, but it’s not in production yet.

Licenses: 16 projects/services do not announce any explicit license. Of the ones that do, CC0 1.0 Universal is the most common (15), followed by Public Domain Dedication and License or PDDL (7). A few apply Open Data Commons Attribution (ODC-BY), Open Data Commons Open Database License (ODC-ODbL) or ODC-ShareAlike Community Norms. Other licenses mentioned: ODC-BY-SA (Attribution-ShareAlike), but considering ODC-BY; Creative Commons Attribution-NonCommercial-NoDerivatives (BY-NC-ND); French State Open License-based; and a link to a specific set of data services terms.

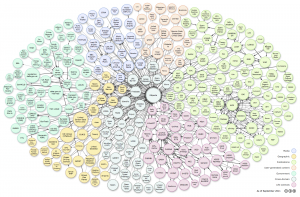

RDF Vocabularies and Ontologies: The most widely used, with the number of projects using each:

- SKOS – 38

- FOAF – 30

- Dublin Core Terms – 29

- Dublin Core – 27

- Schema.org – 22

Here’s the alphabetical list of the RDF Vocabularies and Ontologies used; those also used by Dewey, FAST, ISNI, VIAF, WorldCat.org or WorldCat.org Works are marked with an asterisk.

| RDF Vocabulary & Ontology | # of Projects | Dewey | FAST | ISNI | VIAF | WorldCat.org | WorldCat.org Works |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Archival ontology | 1 | | | | | | |
| Bibframe | 6 | | | | | | |
| Bibliographic Ontology | 14 | | | | | | |
| Biographical Ontology | 7 | | | | | | |
| British Library Terms | 4 | | | | | | |
| Dublin Core | 27 | * | * | * | | | |
| Dublin Core Terms | 29 | * | * | * | | | |
| EAC-CPF | 2 | | | | | | |
| European Data Model Vocabulary | 8 | | | | | | |
| Event Ontology | 4 | | | | | | |
| FOAF | 30 | * | * | * | * | * | |
| ISBD | 4 | | | | | | |
| Organization Ontology | 10 | | | | | | |
| RDA | 10 | * | * | | | | |
| Schema.org | 22 | * | * | * | * | | |
| SKOS | 38 | * | * | * | * | * | |
| WGS84 Geo Positioning | 11 | * | * | | | | |
| Other | 34 | * | * | * | | | |

Other vocabularies or ontologies cited:

- CERIF semantic vocabularies

- CIDOC-CRM (8 projects mentioned this)

- Data Catalog Vocabulary

- DPLA metadata application profile

- Ecological Metadata Language (EML)

- Fabio (FRBR-aligned bibliographic ontology)

- FRBR

- FRBRer model

- ISNI

- Local vocabulary

- MADS/RDF

- MARC RDF

- Metadata Object Description Schema

- Music Ontology

- nomisma.org ontology

- OAI ORE Terms

- OSEGO

- OWL

- Owl 2 Web Ontology Language

- Purl.org/library

- Radatana

- rdaGr2

- RDF Schema

- Reviews Ontology

- Semantic Web for Research Communities

- SIOC (Semantically-Interlinked Online Communities)

- viaf.org/ontology

- VIVO Core

Barriers or challenges encountered in publishing linked data

Learning enough:

- Little documentation or advice on how to build the systems, so had to wing it.

- Steep learning curve for staff to get to grips with Semantic Web technologies.

- So many serializations and understanding how/when to use them.

- Developing technology made it challenging to select options or identify best practice.

- Determining the workflow – lots of planning, discussion, trial and error, looked at what was done for other projects.

- The intended audience (social historians) is usually not trained to query SPARQL endpoints.

- Learning the various RDF serializations and writing XSLT to convert data into those serializations took some time. One of the largest challenges was not having examples of similar small-scale projects from other libraries to use as a reference.

- Selecting appropriate ontologies to represent our data. We worked closely with our Metadata department on choosing ones that we agreed were the most appropriate, or widely used.

- This particular project hinges on the curators describing the relationships, and it can be difficult to explain the Linked Data framework to non IT/LD experts.

- Dealing with multiple agencies which were exporting and then importing the metadata; the former had no experience working with bibliographic linked data, and the latter were run by non-librarians. This led on occasion to misunderstandings of intention and purpose.
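Several of the responses above mention the number of RDF serializations. As a rough illustration of how the same statements look in two of them, here is a minimal Python sketch that writes three SKOS triples first as N-Triples and then as Turtle. It uses only the standard library; the `example.org` concept URI, the labels, and all function names are made up for the example, and a real project would more likely rely on an RDF library than on hand-rolled string formatting.

```python
SKOS = "http://www.w3.org/2004/02/skos/core#"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"

# Hypothetical concept: example.org is a placeholder namespace.
SUBJECT = "http://example.org/concept/maps"
TRIPLES = [
    (SUBJECT, RDF_TYPE, ("iri", SKOS + "Concept")),
    (SUBJECT, SKOS + "prefLabel", ("literal", "Maps", "en")),
    (SUBJECT, SKOS + "altLabel", ("literal", "Cartographic materials", "en")),
]


def escape_literal(text):
    """Escape backslashes, double quotes and newlines inside a literal."""
    return text.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def shorten(iri):
    """Abbreviate SKOS IRIs with the skos: prefix; leave others in full."""
    if iri.startswith(SKOS):
        return "skos:" + iri[len(SKOS):]
    return f"<{iri}>"


def object_term(obj, use_prefixes=False):
    """Format an object that is either ("iri", value) or ("literal", value, lang)."""
    if obj[0] == "iri":
        return shorten(obj[1]) if use_prefixes else f"<{obj[1]}>"
    _, value, lang = obj
    return f'"{escape_literal(value)}"' + (f"@{lang}" if lang else "")


def to_ntriples(triples):
    """N-Triples: one complete triple per line, every IRI written in full."""
    return "\n".join(f"<{s}> <{p}> {object_term(o)} ." for s, p, o in triples)


def to_turtle(triples):
    """Turtle: same triples with a prefix, 'a' for rdf:type, grouped by subject."""
    by_subject = {}
    for s, p, o in triples:
        predicate = "a" if p == RDF_TYPE else shorten(p)
        by_subject.setdefault(s, []).append(f"{predicate} {object_term(o, True)}")
    blocks = [
        f"<{s}>\n    " + " ;\n    ".join(pairs) + " ."
        for s, pairs in by_subject.items()
    ]
    return f"@prefix skos: <{SKOS}> .\n\n" + "\n\n".join(blocks)


print(to_ntriples(TRIPLES))
print()
print(to_turtle(TRIPLES))
```

N-Triples is verbose but trivial to stream and process line by line, which suits bulk dumps; Turtle carries the same information in a form that is easier for people to read and edit.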

Data cleanup:

- Inconsistency in legacy data – the project highlighted some issues with legacy data. This provided us with an opportunity to enhance our legacy data in order to improve conversion.

- It was very difficult to disaggregate data correctly until we switched to OpenRefine.

- How to handle version control

- Cleaning the data. Establishing the links; we did this partially automatically (by string matching) with manual correction.
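The last response describes linking by string matching with manual correction. The respondent's actual method isn't described in the survey, so the following is only a sketch of one way such a workflow could look: labels are normalized, compared with Python's standard `difflib.SequenceMatcher`, and then either linked automatically or queued for a person to check. The thresholds, the function names, and the `example.org` authority URIs are all assumptions chosen for illustration.

```python
from difflib import SequenceMatcher


def normalize(label):
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    kept = "".join(ch if ch.isalnum() or ch.isspace() else " " for ch in label.lower())
    return " ".join(kept.split())


def propose_links(local_labels, authority, auto_threshold=0.95, review_threshold=0.80):
    """Match each local label to its most similar authority label.

    `authority` maps a label to a URI. Returns two dicts: links accepted
    automatically, and candidate links that need manual correction.
    Labels scoring below `review_threshold` are left unlinked.
    """
    normalized_authority = {normalize(lbl): uri for lbl, uri in authority.items()}
    accepted, needs_review = {}, {}
    for label in local_labels:
        key = normalize(label)
        best_uri, best_score = None, 0.0
        for auth_key, uri in normalized_authority.items():
            score = SequenceMatcher(None, key, auth_key).ratio()
            if score > best_score:
                best_uri, best_score = uri, score
        if best_score >= auto_threshold:
            accepted[label] = best_uri
        elif best_score >= review_threshold:
            needs_review[label] = (best_uri, round(best_score, 2))
    return accepted, needs_review


# Hypothetical authority file and local headings.
authority = {
    "Dickens, Charles": "http://example.org/auth/1",
    "Austen, Jane": "http://example.org/auth/2",
}
accepted, needs_review = propose_links(
    ["dickens, charles.", "Austin, Jane", "Unknown Person"], authority
)
print("accepted:", accepted)
print("needs review:", needs_review)
```

In this toy run the first heading differs only in case and punctuation and is linked automatically, the misspelled second heading lands in the review queue, and the third finds no plausible match. Where to set the two thresholds is a judgment call that depends on how costly a wrong link is compared with the time spent on manual review.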

Technical issues:

- It can be difficult to ascertain who owns data; we have had to remove one dataset…because its owners changed their minds about publishing it.

- A lot of software is not mature, so there is a challenge to keep up with updates, changing components as better software becomes available.

- Size of the file makes it difficult to consume.

- At the moment the end-user tools for data entry and annotation of knowledge graphs are sparse. Also again, there are no tools in production for data modeling that do not require heavy IT support.

- To allow our data to be used outside of the library industry, we need to provide our data in a widely understood descriptive vocabulary. SKOS is too library specific for this. We have chosen schema.org for most of the information, but its orientation is more commercial than descriptive, which makes the data less useful.

- Integration with existing web app infrastructure.
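One response above mentions choosing schema.org so that data can be understood outside the library community. For readers who haven't seen it, here is a minimal Python sketch of a schema.org description of a book serialized as JSON-LD. The title, author, and `example.org` URI are placeholders, the function name is invented for the example, and this is not taken from any respondent's implementation.

```python
import json


def describe_book(title, author_name, work_uri):
    """Build a schema.org description of a book as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Book",
        "@id": work_uri,  # placeholder identifier for the described work
        "name": title,
        "author": {"@type": "Person", "name": author_name},
    }


record = describe_book(
    "An Example Title", "Example Author", "http://example.org/work/1"
)
print(json.dumps(record, indent=2))
```

The sketch also hints at the trade-off the respondent describes: generic properties such as `name` and `author` are widely understood, but they carry less descriptive detail than library-oriented vocabularies.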

[Originally posted 2014-09-03, updated 2014-09-04]

Coming next: Linked Data Survey results–Technical details

Karen Smith-Yoshimura, senior program officer, worked on topics related to creating and managing metadata, with a focus on large research libraries and multilingual requirements. Karen retired from OCLC in November 2020.

4 Sept 2014 update incorporated responses from Research Libraries UK and The European Library.